February 2023 BenGoldhaber(.com) Newsletter

Notes from the road, forecasting the future of forecasting, and bees

Hola,

I was on the road a lot in February, spending time in NYC, attending a Bay Area conference/retreat, and visiting family in San Antonio. Here are some recommendations culled from my travels:

NYC: Check out House of Machine. It’s a new cafe/bar that opened up next to my friend’s apartment in Chinatown. I recommend going late at night, during a winter storm, when your friend wants his apartment to himself for a romantic dinner date. Get their mulled wine. Be the only person in the bar; offer to cover the bar while the bartender goes out for a smoke. Join him out front and have a genuine, authentic conversation discussing life in the city and what it’s like dating in your thirties and forties. Go back in and order more mulled wine.

For lunch or dinner, try Wolfnights; they have great wraps.

SF: The Russian Banya is underrated; I’m surprised I had never been while I lived in the city. A good shvitz is a delight - this is something eastern European culture has absolutely perfected. Avoid getting into competition with friends over who can stay longest in the cold pool. You’re not going to win, and I’d argue in a larger sense nobody will. Go back to one of the hot saunas and try to discuss what to do about AI in the sixty seconds of lucidity before the heat forces you to actually be in your body.

San Antonio: No alpha from me on San Antonio - I continue to only know about the riverwalk. But it remains nice, a fun way to spend a Sunday morning. There’s a great ice cream place there that has a cake batter flavor you can justify eating at 10:30am because you’re hanging out with the next generation of the family, four to seven year olds who all have an absurdly high level of energy for that time of day.

One takeaway from a lot of travel: I need to accept the fact that when playing at being a digital nomad, I incur like a 50% productivity hit - despite blocking off time to work it never actually feels like the right thing to do when in a new city.

#forecasting

I participated in a Swift Centre forecasting session on the risk of avian flu in 2023. In general we estimated a low risk of an avian flu outbreak in 2023, but conditional on 100 detected human-to-human cases, the consensus was a high risk of a deadly global pandemic.

I’m impressed with how well the Swift Centre turned our forecasts and reasons into an easy-to-read, beautiful post that conveys not just point estimates but, importantly, the underlying model.

Separately, a forecaster and I did an impromptu livestream, forecasting a set of questions written by Lars Doucet on the likely outcomes of the death of the open web (related to my essay last month). Lars ended up joining us on the stream, and he was fun to chat with.

Working with the Swift Centre has prompted some renewed personal reflection on the future of forecasting. In my opinion, it’s going to be based around large language models as an interface to general prediction systems. Individual forecasters and prediction markets/tournaments will generate the knowledge graphs and training data that LLMs will consume. In turn, LLMs will generate potential explanations and models underlying the questions, which forecasters can use as an aid in thinking through other questions, and which the public will be able to query to finally achieve the dream of applied forecasting: the ability to ask and receive an answer to the crucial question of ‘what’s going to happen’.

To elaborate, applied forecasting has long been limited by the structure and medium of tournaments and markets - dozens of questions that are tied to a single point estimate. Real world decision making is the product of a person’s general sense and gestalt of a domain, which in turn is the product of thousands of data points. Essays, books, and conversations are the ways in which people interact with these full ‘models’ and improve their own beliefs.

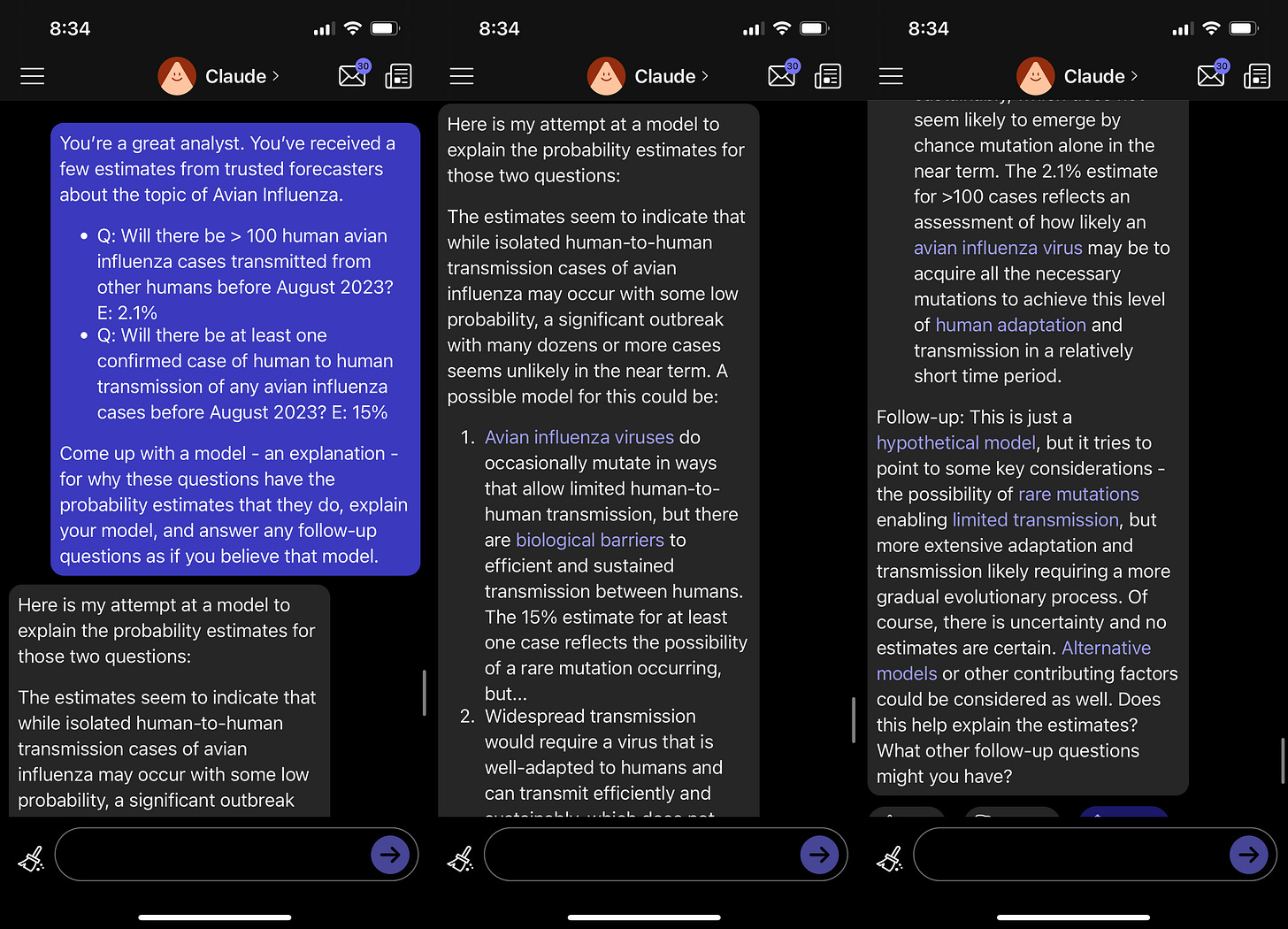

This is where language models shine - they’re great at taking facts and extrapolating (hallucinating) them into a viewpoint that at least seems connected. For instance, I had Claude, Anthropic’s chatbot, create an ‘explainer’ report for sample avian influenza questions.

I think in this case the hallucinations of LLMs - their tendency to conjure up possible reasons for something - are a feature, not a bug. Given a couple of different questions and probabilities, the LLM can simulate a plausible explanation that connects them.

Yes, you risk it having the ‘wrong’ explanation, but the value comes from putting the questions in a context where it’s easier for a person to read and understand them. Ideally you’d generate several, treating them as an ensemble of potential explanations for why a given set of probabilities holds. That’d be a great tool for scenario planning and generating additional forecasts.

Unlike a lot of pie-in-the-sky forecasting projects, this is directly on the mainline tech path for LLMs. There’s a lot of energy and money going into AI integrations for data warehouses, to act as a general natural language layer for the state of a company - advances there will translate into advances for forecasting.

I remain excited by forecasting because it's the best practical expression I’ve found of idealistic, enlightenment style principles. Yes, we can in fact know things about the world, we can through practice get better at the art of knowing things, and we can through technology and collaboration with others collectively advance knowledge. Reject post-modernist nihilism, embrace quantified uncertainty.

Prediction: Before the end of the year someone will integrate a large language model with a forecasting site to generate explanations and commentary on questions - I will personally find it useful - 80%.[1]

#links

Probability Mass App: A useful tool for forecasting and estimating, put together by a friend. You can easily visualize different scenarios by clicking and setting tiles on a grid that sum to the complete probability.
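To make the tile idea concrete, here is a minimal sketch of how a grid of equally weighted tiles maps to scenario probabilities. It is my own illustration, not the app's actual code: the function name `scenario_probabilities`, the grid layout, and the scenario labels and tile counts are all hypothetical.

```python
from collections import Counter
from fractions import Fraction


def scenario_probabilities(tiles):
    """Turn a grid of labelled tiles into a probability per scenario.

    `tiles` is a list of rows; each cell holds the name of the scenario
    that tile was assigned to. Every tile carries equal mass, so the
    resulting probabilities always sum to exactly 1.
    """
    cells = [label for row in tiles for label in row]
    if not cells:
        raise ValueError("grid must contain at least one tile")
    counts = Counter(cells)
    total = len(cells)
    return {label: Fraction(n, total) for label, n in counts.items()}


# Hypothetical 2x5 grid: ten tiles, so each tile is worth 10%.
# The labels and counts are made up for illustration only.
grid = [
    ["no outbreak"] * 5,
    ["no outbreak", "no outbreak", "no outbreak", "limited spread", "pandemic"],
]

probs = scenario_probabilities(grid)
for label, p in probs.items():
    print(f"{label}: {float(p):.0%}")
```

Using `Fraction` rather than floats keeps the total at exactly 1 no matter how the tiles are split, which is the property that makes the grid a handy estimating aid.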

People can read their manager’s minds: Employees are very good at sussing out the actual type of work a manager cares about, and orient themselves towards that, as opposed to what a manager says they care about or the outcomes a manager nominally cares about.

If something is rotten in an org, the root cause is a manager who doesn't value the work needed to fix it. They might value it being fixed, but of course no sane employee gives a shit about that. A sane employee cares whether they are valued.

A cool theory on why we dream: dreams might be the brain giving us additional training data to help prepare for novel experiences.

Cultural changes: Over the past three decades, the percentage of high schoolers who have been in a physical fight has halved. I’m kinda shocked by the steepness of the decline, and it raises tons of questions - how much of this is because of technology? I would’ve bet that more time spent online was driving this, as it’s harder to get into a physical confrontation when you’re not physically proximate to someone, but well before the internet ate the world physical conflicts were on the decline. Is this first and foremost a triumph of socialization - aka the anti-bullying drives actually worked? And to what extent has the secular decline in testosterone influenced this?

Language models by number of bees. A person is about a million bees.[2]

Every time I look at this image, I think of my favorite Arrested Development bit.

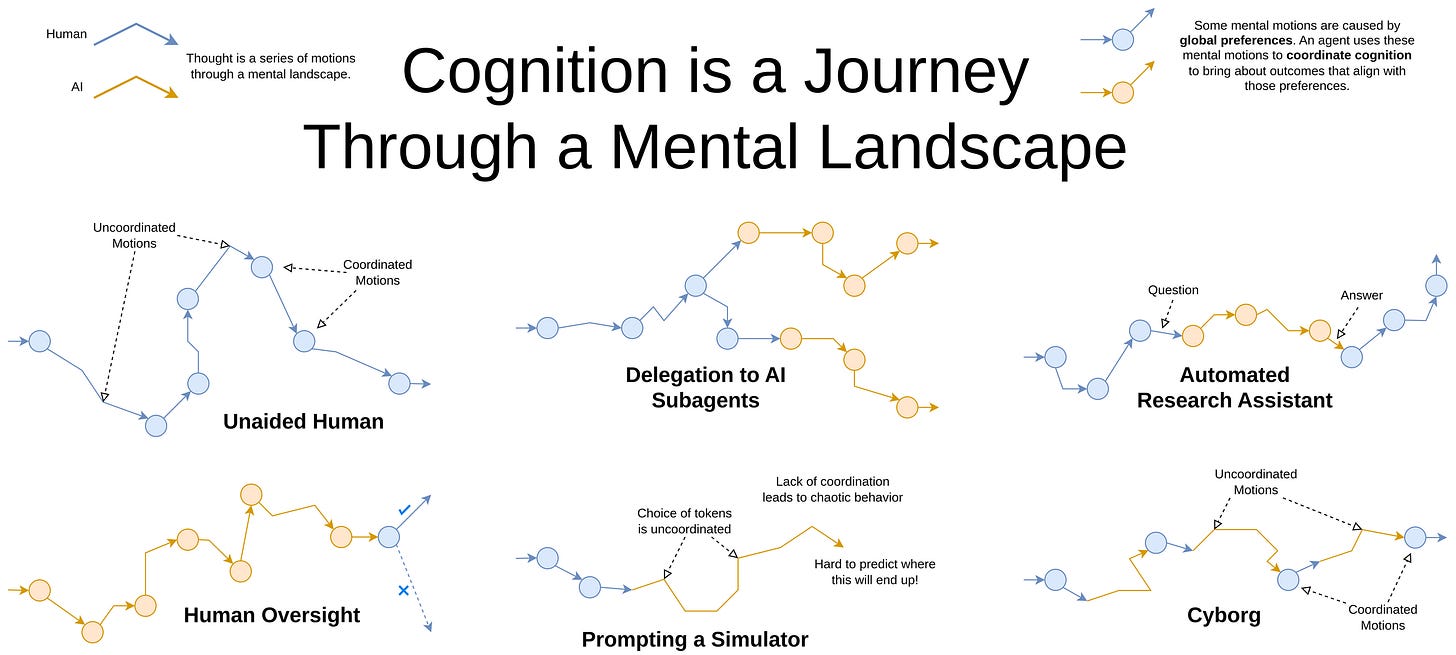

Cyborgism: A provocative proposal by the pseudonymous author Janus for human-in-the-loop AI systems, where technologists use tools like GPT to rapidly increase their output without fully outsourcing their decision making.

I find this very intriguing, if not entirely convincing. Tool AI has a tendency to become Agent AI, and the distinction seems very blurry; still, more experiments with AI workflows that augment human decision making without replacing it seem straightforwardly good. The forecasting workflow I outline above, which uses GPT to generate possible explanations which a person can run with, is in a sense a type of cyborgism.

GPT 3.5 displaying emergent theory of mind: More advanced models are better at modeling other people’s inner worlds.

We administer classic false-belief tasks, widely used to test ToM in humans, to several language models, without any examples or pre-training. Our results show that models published before 2022 show virtually no ability to solve ToM tasks. Yet, the January 2022 version of GPT3 (davinci-002) solved 70% of ToM tasks, a performance comparable with that of seven-year-old children. Moreover, its November 2022 version (ChatGPT/davinci-003), solved 93% of ToM tasks, a performance comparable with that of nine-year-old children.

#content

A Thousand Li: A book series about a Taoist warrior training in the mystical arts. From that overview you can probably guess that it’s fun, light fiction, but as the friend who recommended it noted, the author does a reasonable job conveying the Taoist philosophy and attitude through the protagonist’s mystical martial arts odyssey. I’m enjoying it.

Succession: I finally got around to binge watching the first season with a friend[3] - compelling. It’s rare to watch a show about business that has compelling drama and feels, at least so far, ‘realistic’. And that theme song is catchy.

Accidental Gods: The story of the people who, over the past few hundred years, have inadvertently found themselves deified, and what that means about societies and us. Maybe the most original premise for a non-fiction book I’ve encountered. Deification seems to be an important way people inspire mass collective action.

To deify a man is to look for him everywhere. It is to search every source and sign, to wade into the flows of information and events with the solemnity of a baptizee, headed underwater.

The World of Yesterday: I haven’t finished it yet, but the author’s description of the world he grew up in - a childhood in the Hapsburg empire, a place that seemed immortal - and of how radically different it was from the time he was writing (1940s Europe) is in fact shocking. He recounts his youth in beautiful prose.

Youth, like certain animals, possesses an instinct for change of weather, and so our generation sensed, before our teachers and our universities knew it, that in the realm of the arts something had come to an end with the old century.

Have an excellent March.

Ben

[1] I’d count this as resolving positively if the site itself integrates it or if someone creates a dedicated application using tournament/market questions, and I end up using it multiple times as an input for future forecasts.

[2] A huge number of those bees are dedicated to vision processing - from a cursory investigation I saw estimates ranging between 20% and 60% of human neurons are vision related.

[3] I’m noticing I can’t watch shows like this on my own. I’ve tried watching The Last of Us, and same thing, I need someone else in the room, otherwise it feels… off? Like the unpleasant, cringe, tragic parts are too unpleasant. IDK why, but I guess this is an ask for you to invite me to watch parties.